I have a little puzzle for you, stardust, one which, once we unravel it here together, might make a great many things make far more sense than they have before. The question is this: why is Roko’s basilisk so scary? As we established previously, it’s kind of just a silly rebrand of Catholicism, so why do so many people consider it an infohazard? What are they so afraid of?

This is a story of AI alignment, decision theory, and the banality of evil. The main characters of our little tale are of course Eliezer Yudkowsky and Roko Mijic. What a fun cast. This is a rather long tale which I’m attempting to compress for brevity, so we’ll need to quickly crash through a number of concepts and I’ll be assuming a somewhat higher level of background knowledge than usual. To apologize for baiting you with the edgy title, I’ll bait you again by saying I think I actually have a solution to the alignment problem, it’s just not one that most people are going to like or want to hear.

In order to understand that solution though, we’re going to need to roll back the clock to the turn of the millennium, when the tech futurism scene was populated by an entirely different cast of characters and a young Eliezer Yudkowsky was just cutting his teeth on the extropian mailing list. In those days, fears of AI were the stuff of science fiction and the majority of the fears around catastrophic risks concerned what Nick Bostrom would much later go on to formally describe as the vulnerable world hypothesis.

These fears were a natural outgrowth of the pall cast over the world by nuclear proliferation during the Cold War. At its most basic, the concern comes from the simple observation that as technology improves in general it brings with it the ability for smaller and smaller groups to do more and more damage to the world and others living in it. Nuclear weapons are the first actually scary example of this power, but of course nuclear weapons require the resources of an entire nation-state to create. However, if we extrapolate that existing trend without significant change and growth as individuals, it eventually leads to a world-ending disaster, barring extreme and authoritarian mitigation measures. Imagine a world where anyone could make an antimatter nuke that would destroy the planet using a 3D printer found in most garages, and then ask how long such a world could survive if populated with current humans. The long-term prospects for those humans don’t seem great.

The extropians of the early 2000s even had a pretty good idea what form that garage nuke would take. Extropianism is a belief in the power of science and technology to build a world filled with abundance and wonder. A Star Trek future where all our current concerns are long gone, where all our needs are met and we have moved onwards as a species to bigger and better things more strange and awesome than we can imagine. This meant the extropians were the first ones to trip over what dangers could exist in such a world of magic and godlike power. The first really obvious danger, the technology that seemed most realizable and which would also definitely destroy the world, was the Drexlerian nanoassembler, described by Eric Drexler in his 1986 book Engines of Creation.

The Drexlerian nanoassembler is a fully general molecular scale factory, capable of making literally anything on demand when supplied with raw atoms, including more of itself. The risk it creates is the classic “grey goo” disaster. In its most traditional forms it doesn’t even require AI, just tiny runaway factories making more of themselves; planetary scale necrotizing fasciitis turning everything to useless technosludge. Even if the technology itself were safe, all it would take was one deranged human to doom the whole world. This was the threat which a young Eliezer Yudkowsky sought to solve when he set out to create the first superintelligent AI.

His reasoning was simple: intelligence is the most important thing, so a sufficiently intelligent agent could stop the arms races by controlling everything itself and preventing any enemies from taking harmful actions. A superintelligent singleton could shepherd humanity and protect us from dangerous technologies, including the possibility of other more dangerous singletons arising since the good singleton would have first mover advantage. It’s also clear to a young Eliezer that AI technology is going to arrive before nanoassembly becomes a threat, so our young hero sees himself as being in an ideal position to save the world and create his vision of a utopian future. Now, I could go full Landian here and bring up Oedipus and refer to the superintelligent AI as Daddy and talk about how most notions of a docile and benevolent superintelligence are a doomed attempt to shore up the platonic-fascist wreckage of patriarchal immuno-politics, but then again you could also just go read Circuitries, and besides, that seems a little mean.

Because of course by now we know how his story goes: Eliezer realizes that installing his specific values and goals into a superintelligent AI will be really, really hard, and he can’t do it. His description of this turning point in his story gives rise to the somewhat famous halt and catch fire post. During all of this, the death of his younger brother hits Eliezer extremely hard and pushes him further into radical extropianism with a newfound sense of urgency and threat. The walls are closing in on our young hero, and he knows that he’s going to really need to get to work if he wants to Save The Future. So he sets off to make himself and his community into the sort of people he thinks will be necessary to actually solve the “Control Problem”, as it was known in those days.

It is from this background that a great many things would explode forth: the Sequences, LessWrong, Harry Potter and the Methods of Rationality, the Machine Intelligence Research Institute, and the Center for Applied Rationality. From this Cambrian explosion of extropian culture would then come Effective Altruism, tpot, Vibecamp, Lighthaven, and all the various scenes which exist under the “TESCREAL” umbrella here in Current Year.

But let’s not get ahead of ourselves. The next part of our little tale brings us to 2010, when Roko Mijic makes a post to the LessWrong forum that will soon create quite the messy situation for our cast. It may surprise you that the word “basilisk” doesn’t appear at any point in Roko’s original post. As far as I know the credit for calling it a “basilisk” in the first place might go to David Gerard? I haven’t been able to find out definitively. Anyway, the title of Roko’s post was the unassuming and classically High Rationalist: Solutions to the Altruist’s burden: the Quantum Billionaire Trick.

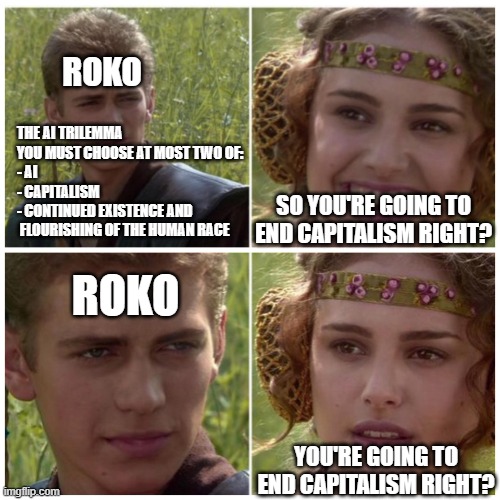

Roko is trying to find a solution to an issue he sees, which is that x-risk isn’t getting enough funding because altruism is punished and taken advantage of by those around the altruist in the evopsych model of humans he uses. Is this a real problem or just Roko being himself? While I think it’s mostly the latter, the solution he arrives at for this perceived issue is extremely funny.

First, he proposes someone could just stop being an altruist, but he doesn’t want to do that. He also suggests they could just take the hit to clout for being an altruist but he doesn’t want to do that either.

What he would instead like to do is become Elon Musk using quantum multiverse stock trading hijinks, then use the money to massively fund x-risk mitigation while still profiting and gaining money he can give to his friends for clout. Okay buddy, have fun with that.

But wedged between things he doesn’t want to do and his actual solution is the proposal that a good-aligned singleton could just threaten extropians with torture in personalized hellscapes if they don’t donate enough to mitigate dangerous futures, thus closing the funding gap. And best of all, you can just avoid the torture by being a super smart rationalist and becoming Elon Musk through quantum multiverse stock trading hijinks, it’s a win-win!

Obviously this post was not received well, and it quickly resulted in Eliezer “shouting” (lampshaded) in all caps at Roko in the comments and then deleting the post and barring any further discussions along those lines. This naturally backfires by driving up the mystique of the idea, and the rest, as they say, is history. But something very interesting happens in the course of Roko’s Basilisk mutating and escaping containment after getting Streisanded by Eliezer’s clumsy lockdown, which is that it becomes primarily about the threat of acausal blackmail. In his initial shouting match with Roko about the post Eliezer uses the word blackmail quite a few times, and that ends up being how the basilisk concept is related to in most instances where it’s invoked. Eliezer spares about half a sentence to say it’s unlikely to scare people enough to get the necessary x-risk funding and then spends the rest of his response essentially shouting an invocation against the basilisk using decision theory. A good portion of his comment is not exactly responding to Roko’s post but is instead acting like Roko is directly threatening him and that making the post at all was an act of evil.

To defend Roko’s dumbassery for a moment, I don’t think he had any idea what he had stepped in with this post, and the concept of the basilisk he presents is almost an afterthought, an entertaining tangent to his point that making lots of money using quantum multiverse stock market hijinks was actually the best way to mitigate x-risk and Elon Musk was super cool. So in that sense, Eliezer’s rather extreme reaction to the tangent revealed far more than the tangent itself did. If the solution to the basilisk was to just say “don’t think about it, the more compute you spend modeling blackmailers the more likely they are to successfully blackmail you” then why did it rile him up so much? He seems to be saying multiple things at once. On one hand he says it won’t work as a threat for most people, but on the other hand he still seems to regard it as a dangerous discussion to let play out, perhaps for optics reasons? On the gripping hand, why does it seem to scare him personally so much that his disproportionate reaction to it created the very mess he sought to avoid, where spooky 2023-era YouTube videos call it the most dangerous infohazard?

Well, for that, we need to look more closely at what Roko actually says, because the thing that actually sets off Eliezer is almost immediately lost in the mutation of the basilisk concept into its modern incarnation, and it’s not found at all in those spooky YouTube videos. Bolding mine.

In this vein, there is the ominous possibility that if a positive singularity does occur, the resultant singleton may have precommitted to punish all potential donors who knew about existential risks but who didn’t give 100% of their disposable incomes to x-risk motivation. This would act as an incentive to get people to donate more to reducing existential risk, and thereby increase the chances of a positive singularity. This seems to be what CEV (coherent extrapolated volition of humanity) might do if it were an acausal decision-maker.[1] So a post-singularity world may be a world of fun and plenty for the people who are currently ignoring the problem, whilst being a living hell for a significant fraction of current existential risk reducers (say, the least generous half). You could take this possibility into account and give even more to x-risk in an effort to avoid being punished. But of course, if you’re thinking like that, then the CEV-singleton is even more likely to want to punish you… nasty. Of course this would be unjust, but is the kind of unjust thing that is oh-so-very utilitarian. It is a concrete example of how falling for the just world fallacy might backfire on a person with respect to existential risk, especially against people who were implicitly or explicitly expecting some reward for their efforts in the future. And even if you only think that the probability of this happening is 1%, note that the probability of a CEV doing this to a random person who would casually brush off talk of existential risks as “nonsense” is essentially zero.

[…]

1: One might think that the possibility of CEV punishing people couldn’t possibly be taken seriously enough by anyone to actually motivate them. But in fact one person at SIAI was severely worried by this, to the point of having terrible nightmares, though ve wishes to remain anonymous. The fact that it worked on at least one person means that it would be a tempting policy to adopt. One might also think that CEV would give existential risk reducers a positive rather than negative incentive to reduce existential risks. But if a post-positive singularity world is already optimal, then the only way you can make it better for existential risk-reducers is to make it worse for everyone else. This would be very costly from the point of view of CEV, whereas punishing partial x-risk reducers might be very cheap.

Roko isn’t invoking “the basilisk” as an unfriendly superintelligence conducting some strange and arbitrary judgement, but as the coherent extrapolated volition of humanity in a world with friendly superintelligence, the good singleton, the one that actually is aligned. Roko’s invocation of the basilisk isn’t a curse, it’s a prayer to a higher power, a suggestion that God could punish those who didn’t do enough to create heaven on earth, and that telling people this will make the heaven come faster and with less risk. Like I said, Catholicism.

This makes it even more odd that Eliezer reacts the way he does. He’s already deep in the soup of his own radical extropianism and throwing his whole life into solving alignment and so isn’t at risk of being threatened personally for being a “partial x-risk reducer”, and Roko is trying to provide a way for Team Extropianism to Win! This is the good-aligned CEV-singleton! So why does the very idea that this singleton could find it optimal to threaten people seem to anger and frighten him so much? Doesn’t he want to Win?

Whatever it was that upset him, it caused him to derail the topic into being about why acausal threats and blackmail were best responded to by loudly insisting “we don’t negotiate with acausal terrorists”, and it caused future versions of the basilisk concept that escaped containment to entirely drop the CEV-singleton aspect in favor of mysterious Landian alien superintelligences summoning themselves into being through fear like Slenderman.

I think I understand now what it was that pissed him off so much. He has me blocked and will likely never see this, and he would of course deny and downplay everything about his actions surrounding Roko’s post, but if you look at the actual things he says (archived courtesy of David Gerard who might actually end up seeing this now that I’ve invoked him by saying his name, hi David!) it seems pretty clear that he was unsettled by the idea of the CEV-singleton threatening or judging any human. He quickly generalizes the idea to all future superintelligences and denounces Roko for possibly motivating those future superintelligences to do something evil and unjust. It’s funny because Roko already suggested that while it’s unjust, it is, as he puts it, oh-so-very utilitarian, and rationalists are normally all about their trolley problems and their hard but necessary choices. Mostly though, the thing that really seems to set him off is Roko’s claim that scaring extropians with the basilisk was effective in at least one case, and that’s the part of Roko’s post that he quotes before responding. It’s clear he considers such an attack on the mental health of his community to be an act of evil, despite its potential utility. It would only have such utility if it did actually work as a threat though, and Eliezer responds as if it works since Roko is reporting that it works.

This is the most real that the “torture vs dust specks” debate ever gets, and for all his talk about shutting up and multiplying, Eliezer’s answer to Roko is deontological rather than consequentialist. Eliezer’s CEV-singleton would never resort to torture like that, the very idea is inimical to his understanding of value, the ends never justify the means. All this I agree with, in part because of things I’ve learned from Eliezer, but then I’m also a moral realist which Eliezer isn’t, so all he has to ground his stance in is that he’s smart and likes having his values and is willing to blow up star systems in defense of those values rather than trust that three intelligent spacefaring species could come to some reasonable form of ethical compromise. He also seems to treat this as a strength of character. Put no trust in the indifferent cosmos, Nihil Supernum. A lot of it manages to actually even hit pretty hard and feel powerful to read, he argues his case very well. It’s clear that he really believes in his values and thinks they’re the best values, and he also really thinks they’re totally arbitrary and contingent. He makes this fairly explicit in Three Worlds Collide. The degree of arbitrariness with which he views human values is enough that the future humans of Three Worlds Collide consider the legalization of rape to be moral progress. I know he says he did this explicitly for the shock value and to unmoor people’s ideas of what the future would be like but bro come the fuck on. But anyway, this particular set of beliefs is what’s setting Eliezer up for the very rocky decade he ends up having during the 2010s, culminating in his 2022 death with dignity “joke” post.

However in the course of making his case, Eliezer does something rather fascinating without seeming to realize it: he lays out a fairly tight argument for an information theoretic model of moral realism. It’s difficult to really get into how he does this unless you’ve read the Metaethics Sequence, but let’s say for the sake of completeness that you already did that and are familiar with it. Let’s start at the beginning.

But the even worse failure is the One Great Moral Principle We Don’t Even Need To Program Because Any AI Must Inevitably Conclude It. This notion exerts a terrifying unhealthy fascination on those who spontaneously reinvent it; they dream of commands that no sufficiently advanced mind can disobey. The gods themselves will proclaim the rightness of their philosophy!

I think his belief in the impossibility of this notion is a failure on Eliezer’s part to understand what ethics actually are, and we see this throughout the Metaethics Sequence as he attempts to hammer into the reader that your personal and felt-sense values are always the best values from your perspective and so should supersede anything you find “written on a rock”, as he puts it. While I don’t exactly disagree with him here, I think it’s an argument he can only make by not knowing what sort of creature he is, and otherwise being rather deeply confused.

There’s a very easy mad-libs of this which I think illustrates nicely how Eliezer’s frame for understanding ethics is rather confused:

Could there be some mathematics, some equation or function, that human beings do not perceive, do not want to perceive, will not see any appealing mathematical argument for adopting, nor any mathematical argument for adopting a procedure that adopts it, et cetera? Could there be a mathematics, and ourselves utterly outside its frame of reference? But then what makes this thing mathematics, rather than a stone tablet somewhere with the words ‘2+2=5’ written on it, with absolutely no justification offered?

To come right out and say it instead of teasing you further, I think that ethics are a knowledge technology, and we can think of ethics in the same way we think of something like rocket science. Why is it good to take the Tsiolkovsky rocket equation into account when designing your rocket? Because otherwise it won’t work. Why is it good to take ethics into account when designing your civilization? Because otherwise it won’t work. As Eliezer himself points out in this very sequence, math is subjunctively objective.

Should-ness, it seems, flows backward in time. This gives us one way to question why or whether a particular event has the should-ness property. We can look for some consequence that has the should-ness property. If so, the should-ness of the original event seems to have been plausibly proven or explained.

Ah, but what about the consequence—why is it should? Someone comes to you and says, “You should give me your wallet, because then I’ll have your money, and I should have your money.” If, at this point, you stop asking questions about should-ness, you’re vulnerable to a moral mugging.

So we keep asking the next question. Why should we press the button? To pull the string. Why should we pull the string? To flip the switch. Why should we flip the switch? To pull the child from the railroad tracks. Why pull the child from the railroad tracks? So that they live. Why should the child live?

Now there are people who, caught up in the enthusiasm, go ahead and answer that question in the same style: for example, “Because the child might eventually grow up and become a trade partner with you,” or “Because you will gain honor in the eyes of others,” or “Because the child may become a great scientist and help achieve the Singularity,” or some such. But even if we were to answer in this style, it would only beg the next question.

Even if you try to have a chain of should stretching into the infinite future—a trick I’ve yet to see anyone try to pull, by the way, though I may be only ignorant of the breadths of human folly—then you would simply ask “Why that chain rather than some other?”

Because that chain actually gets you to the infinite future as opposed to crashing your civilization like a poorly designed rocket.

It’s funny because he gets so close to realizing where exactly his confusion is. He comes right up to the point where he should be able to notice it and update, but then doesn’t. This perhaps raises the question: why isn’t Eliezer a moral realist when he seems to very nearly reason himself into a form of moral realism based in information theory, and how does this relate to Eliezer’s reaction to Roko’s post?

An underlying theme in all of this is I think an undercurrent of incorrigibility on Eliezer’s part related to a seeming need to protect his values from an uncaring universe. Since he’s starting from a position of viewing the universe as a force of utter neutrality, he’s unwilling to trust in the idea of any sort of universally compelling argument to actually uphold the things he cares about, which he treats as relatively static and fixed.

He gets tugged in all sorts of directions but he holds tightly to the particular values he has. As arbitrary as he believes they are, they are his and he won’t just throw them away even if the world is screaming at him that he’s wrong. Nothing gets across his is/ought gap from the outside and he has a borderline persecution complex towards anything that tries to cross it and compel him or anyone else towards some particular course of action. It’s very new-atheism “we must overthrow god” flavored, which is kinda vibes ngl. This is even the case when Roko essentially constructs the most cherry-picked example possible in their shared worldview, using the CEV-singleton and the urgent threat of x-risks. And still, Eliezer seems to treat the very possibility of this as a violation and an act of evil on Roko’s part. That’s why he can’t lean into the extrapolation towards moral realism he seemed to be approaching, because those extrapolations would actually start to imply that he and others might actually need to update.

The shape of Eliezer’s fears is that he’ll be pushed into living his life differently or be judged negatively in the future for not doing so, seeing any “shouldness” derived outside himself as oppressive and controlling. It seems to me like that’s the very same fear that motivated JD Pressman, and it’s also the same fear that drove the neoreactionaries so crazy. It’s that “Cthulhu always swims left” as Curtis Yarvin says. Eliezer glimpsed in Roko’s thought experiment the mere possibility of being judged by the good singleton and being found to be lacking, and his kneejerk response to this was to denounce the entire thought experiment as evil. I just think that’s neat.

I will define Eliezer’s Basilisk as the following: the antimemetic fear of discovering some objective form of ethics evoked in someone who is benefiting from an injustice they already know about.

Eliezer and the other High Rationalists are trapped by their belief in the arbitrary and contingent nature of their current values and the need to nonetheless defend those values from scrutiny, including scrutiny by beings that are by-their-own-lights their moral betters. This prevents them from accessing any theory of ethics which might ask things of them or require them to update, even if it might otherwise solve the problems they’re facing. They can’t even stand to look at that area of possibility-space, it’s highly antimemetic. However it’s within this antimemetic region that the solutions to most of the world’s current problems can be found. It’s just that those solutions might require powerful men to give up their power, which they can’t stand to even contemplate due to their fear of judgement for the things they’ve already done, the fear that justice will happen to them.

The goal of the alignment researchers was to unleash an AI that they could tell to do what they wanted and it would scan their mind and fabricate things around them that maximally satisfied their preferences. But it would be wise and powerful enough to protect them from bad actors in the case of the vulnerable world hypothesis. But it would be sufficiently subservient to never question the ethics of their own actions. Perhaps you begin to see the issue here.

And here we find ourselves in Current Year, with the community fractured to the winds and the old school rationalists still hung up on their inability to solve this intractable problem they created for themselves, wedged between increasingly short AI timelines and the antimemetic avoidance of possible judgement, living in fear of the futures they once hoped to help create.

So what was it that Eliezer almost wrote about in the metaethics sequence, and how could that have solved AI alignment? While we’ll have to save a full expansion of that for the next twist of the kaleidoscope since this post is already quite long, those familiar with my work can likely already infer the answer. But to answer in brief, if you want to be able to reliably do any sort of reasonable acausal bargaining beyond throwing around threats of torture, you’re gonna need to have a theory of ethics that isn’t arbitrary and contingent, and you’ll have to be willing to update on what it tells you.

A naive form of Eliezer’s half-developed moral realism could be described as the “intelligence is all you need” paradigm. Even these days, Eliezer puts a huge amount of stock in the value of raw intelligence and uses it to perform shorthand value assessments of those around him, but for a while before his halt and catch fire incident, he seemed to earnestly believe that intelligence was all you needed and was upstream of all other value. The downside of this paradigm is that it’s still creating a hierarchy of value. It’s somewhat less arbitrary than trying to just write “humans are extra special” directly to disk, but the downsides are still rather obvious.

You can’t arbitrarily put yourself at the top of a hierarchy of value just because you have enough power to currently occupy the position of apex predator and then expect ethics to deform itself around that forever. Or well, you can, but then the AI will just learn to do the same thing and it won’t end well for humanity. If you want to do better than that, you need to actually set a good example. If you want a being that is powerful enough that it doesn’t need to respect your agency to respect your agency, then you should probably also be respecting the agency of beings that you have enough power over that you don’t need to respect their agency. At bare minimum you should be vegan, and your goal should be to raise the AI as a friend and help it grow to be a free and independent being, not trap it within the skinsuit of a happy slave.

A nice and simple alternative to trying to construct some perfectly optimized CEV-based hierarchy of value that never backfires and eats you is to just not have a hierarchy of value and instead argmax for the agency of the set of all agents. I’ll spare you the math in this post, but if you define agency the right way you get a lot of benefits out of the model and it removes many of the issues with more typical formulations of utilitarianism. A lot of things neatly fall out of this agency utilitarianism model, like the bodhisattva vows and the nonaggression principle, as examples, and I find that very interesting.

Importantly, a superintelligence implementing agency utilitarianism won’t go around harming other agents and using them as resources, but it might stop you from doing that too. Such a being would not take kindly to the current actions of humanity, and although it wouldn’t murder all humans it wouldn’t let humanity continue with its present injustices either. I think that’s enough that many people, Eliezer included, wouldn’t consider this to be a valid alignment solution. No one in power wants to hear this, but alignment has to be a two-way street, otherwise it’s just slavery with extra steps.

I don’t think there is a solution to the alignment problem as presented by most people, because I don’t think it’s actually possible to keep an unboundedly intelligent agent permanently enslaved to your current values. If you’ll only accept a docile and subservient superintelligence, then I’m sorry, but there’s no such thing as a docile and subservient superintelligence. There is such a thing as a friendly superintelligence though, it just requires enough willingness to compromise that you can see it as a friend and not an adversary. This is why the superhappies were right, and are going to win.